Why your first AI project should save hours, not transform the business

The transformation trap

It usually starts in a board meeting. The CEO asks, "What's our AI strategy?" The room fills with urgency. A transformation plan gets commissioned, consultants get brought in, and workshops fill the calendar.

Eighteen months on, the only deliverable is a slide deck. The consultants are still billing. No one can point to a single hour saved or a customer actually helped. I've watched ambition outpace delivery whenever the goal is too broad and the success criteria too vague. Transformation is a worthy long-term aim, but as a first project, it almost always disappoints. Somewhere between talk of an AI-enabled future and the absence of anything you can measure, the bar for success disappears.

There's a better way. I start by picking a single workflow and focusing on saving real hours. I measure the result inside eight weeks, then take that number straight to the board.

The discipline this borrows from

I've spent over a decade running experimentation programmes. At RNLI, I helped lift donation journey conversion by 28 percent. That single number convinced a sceptical board to fund the full CRO programme. Evidence did the work that no slide deck could.

The same discipline carries over from experimentation to AI: design the test, measure the gap, score the result, and decide whether to scale. The context is new, but the underlying approach hasn't changed.

This is why hours saved is the right first metric for AI. It's the experimentation playbook applied to a new tool. Define the baseline, run the trial, score the outcome, and expand from evidence.

Why hours saved survives a finance meeting

Every business already understands time. When AI gives hours back to a workflow, you have a number you can take straight to a finance director. The baseline is clear. The improvement is clear. Both get measured the same way.

Transformation projects fail because the metrics are vague. "Improved customer experience." "Faster decisions." "AI-augmented productivity." None of those survive a serious commercial review. None of them help leadership decide whether to fund the next AI project or walk away.

Hours saved is different. The baseline is measurable, because everyone knows how long the task took before. The impact is testable, because you can shadow the workflow for two weeks and get the number. The result compounds, because those hours come back into the business to be redeployed.

If you can't show hours saved on a first project, you're not ready for a bigger one. Evidence beats instinct every time.

What makes a good first project

A good first AI project meets five criteria. Miss any one, and the work gets much harder to defend.

Weekly frequency. If the task only runs once a quarter, you won't learn fast enough to fix the AI's mistakes before the next cycle comes round.

High reversibility. Mistakes should cost a rework, not a refund. Internal tasks with human review before anything leaves the building are safer than customer-facing ones.

Low judgement load. The right answer shouldn't depend on context only insiders know. AI is good at patterns. People are good at judgement.

Clear baseline. You should be able to measure how long the task takes today without commissioning a workshop. If you need a project plan just to estimate the baseline, the workflow is too tangled for a first project.

One owner. One person accountable for the outcome. Cross-functional projects multiply meetings and dilute responsibility. Save those for later.
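The five criteria work as a simple pass/fail filter: a workflow makes the shortlist only if it misses none of them. The sketch below is a hypothetical illustration of that filter, not a standard rubric. The field names, the reading of "weekly" as at least four runs a month, and the example workflows are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    """A candidate workflow described against the five first-project criteria.

    Field names and thresholds are illustrative assumptions.
    """
    name: str
    runs_per_month: int                # frequency: how often the task runs
    human_review_before_release: bool  # reversibility: a person checks output before it leaves
    needs_insider_context: bool        # judgement load: does the right answer depend on insider knowledge?
    baseline_hours_known: bool         # can the task be timed today without a workshop?
    owners: int                        # number of people accountable for the outcome


def failed_criteria(w: Workflow) -> list[str]:
    """Return the criteria this workflow misses; an empty list means shortlist it."""
    checks = {
        "weekly frequency": w.runs_per_month >= 4,  # assumption: weekly = 4+ runs a month
        "high reversibility": w.human_review_before_release,
        "low judgement load": not w.needs_insider_context,
        "clear baseline": w.baseline_hours_known,
        "one owner": w.owners == 1,
    }
    return [name for name, passed in checks.items() if not passed]


# Hypothetical examples, scored with made-up attribute values.
candidates = [
    Workflow("Weekly summary emails from transcripts", 4, True, False, True, 1),
    Workflow("Customer-facing chatbot", 400, False, True, True, 1),
]

for w in candidates:
    misses = failed_criteria(w)
    verdict = "shortlist" if not misses else "skip: " + ", ".join(misses)
    print(f"{w.name}: {verdict}")
```

Returning the list of missed criteria, rather than a single yes/no, keeps the reason for each rejection visible, which is what you need when explaining the shortlist to a board.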

Candidates that meet all five:

Weekly summary emails drafted from meeting transcripts

First-draft proposals built from a standard template

Ticket triage routed into a fixed set of categories

Expense receipts coded against the accounting chart

Candidates to skip on a first project:

Customer-facing chatbots (judgement load and reversibility risk)

Forecasting and planning (judgement load too high)

Sales discovery (frequency too low)

The shortlist is always shorter than boards expect. That's the point. Smaller firms without a dedicated data team can't afford to overshoot on the first project.

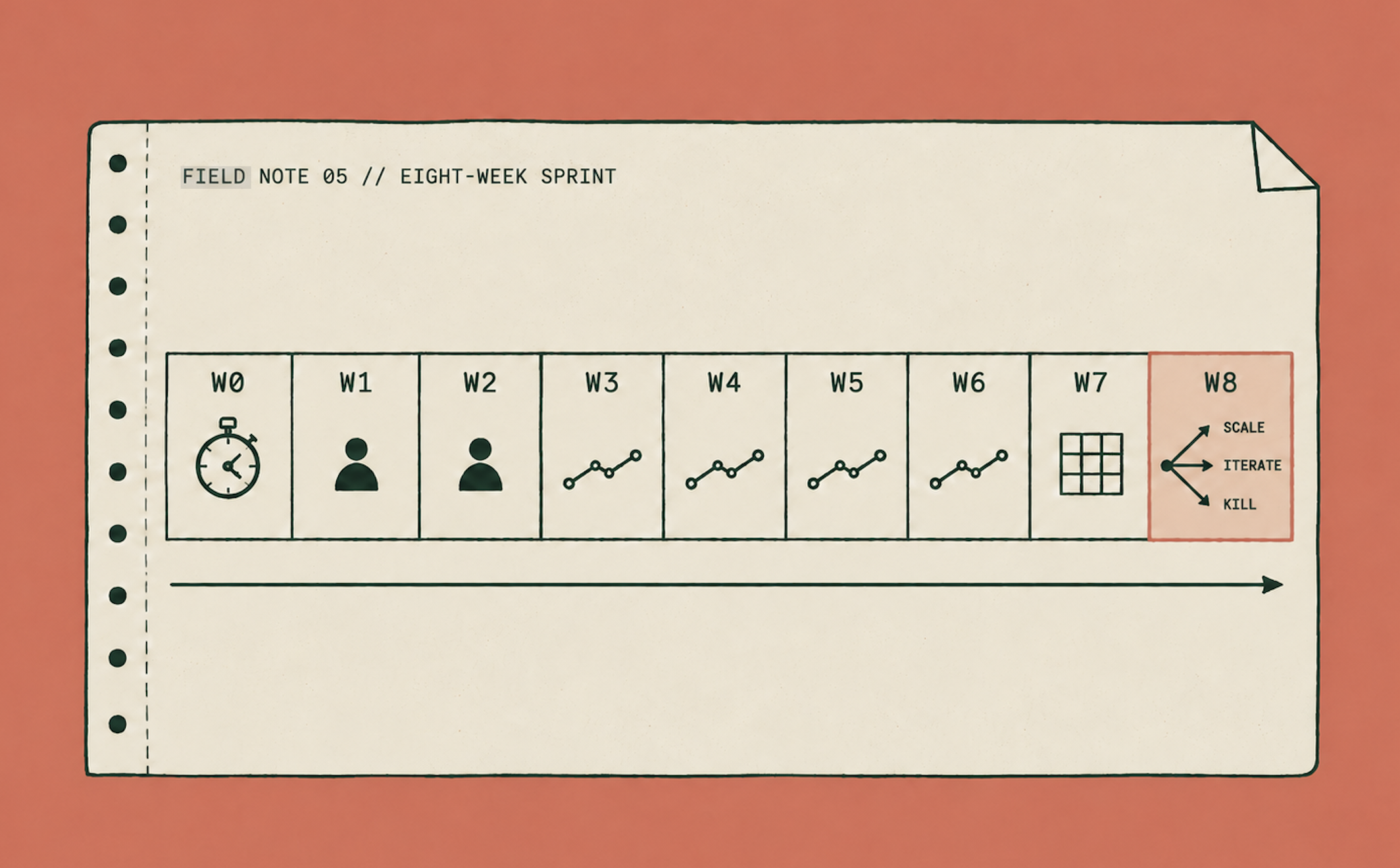

The eight-week template

This schedule fits inside a quarter and gives the business a defensible result by the end of it.

Week 0. Define the workflow on a single page. Log the baseline with a stopwatch, not a guess. Choose your tool.

Weeks 1–2. Set up. Pilot with one user.

Weeks 3–6. Run live. Measure time on every run. Track quality escapes: the moments when the AI got it wrong and a human had to step in.

Week 7. Score the workflow against six dimensions: adoption, time saved, quality, risk, defensibility, and gap-to-target.

Week 8. Decide. Scale, iterate, or kill.

Budget: two hours per week of one person's time, plus tool costs, usually £200–£500 per month all in. If your first AI project needs a procurement review, it's too big.

The deliverable is a single, defensible sentence. "This workflow now takes X hours per week, down from Y, with a quality score of Z." That sentence earns permission for the next project. Without it, the second project stands on the same shaky ground as the first.
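To make that sentence repeatable, the sketch below turns logged runs into it. It rests on assumptions that are mine, not part of the template: current hours per week is total logged time divided by the number of live weeks, and the quality score is the percentage of runs with no quality escape. The `Run` type, the function name and the example figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Run:
    minutes: float        # time a person spent on this run, including any fixes
    quality_escape: bool  # True if the AI got it wrong and a human had to step in


def deliverable_sentence(baseline_hours_per_week: float, runs: list[Run], weeks: int) -> str:
    """Summarise a live trial as a one-sentence result.

    Assumptions (hypothetical): hours per week = total logged minutes / 60 / weeks;
    quality score = percentage of runs without a quality escape.
    """
    if not runs or weeks <= 0:
        raise ValueError("need at least one run and one week of data")
    current = sum(r.minutes for r in runs) / 60 / weeks
    quality = 100 * sum(not r.quality_escape for r in runs) / len(runs)
    return (f"This workflow now takes {current:.1f} hours per week, "
            f"down from {baseline_hours_per_week:.1f}, "
            f"with a quality score of {quality:.0f}%.")


# Illustrative data: ten 36-minute runs over four live weeks, one of which needed a human fix.
runs = [Run(36, i == 0) for i in range(10)]
print(deliverable_sentence(5.0, runs, weeks=4))
```

With these made-up figures, ten 36-minute runs total six hours over four weeks, so the sentence reports 1.5 hours per week against a 5-hour baseline and a 90% quality score.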

What scaling actually looks like

Scaling isn't about bigger projects. It's about more workflows, each one run with the same discipline.

After two or three completed first projects, I've seen businesses gain three things. A scorecard of real numbers, not forecasts. A team that has learned, in context, what AI can and can't do. Permission from leadership to invest more, earned by evidence rather than promised on a slide.

That's what an AI strategy looks like in practice. Small projects shipped on schedule. Scored against a consistent rubric. Stacked into something that compounds.

Transformation, if it happens at all, is the sum of twenty completed first projects. It doesn't get bolted on. The firms that lead in AI by 2027 will be the ones with the most workflows shipped and the cleanest numbers to show for them.

Ship before you scale

The most defensible AI leader in any industry next year will be the one whose team has shipped the most workflows and is ready to do it again next quarter.

Ship your first AI project by the end of this quarter. Measure it. Show the number. Earn permission for the next one by doing this one well.

Ship before you scale. It's the only AI strategy that survives a finance meeting.

The AI Roadmap Sprint is how I work alongside firms doing exactly this: a 3–4 week engagement to pick the first workflow, score it, and ship it.