From Experimentation to AI: Why CRO People Are the Best AI Adopters

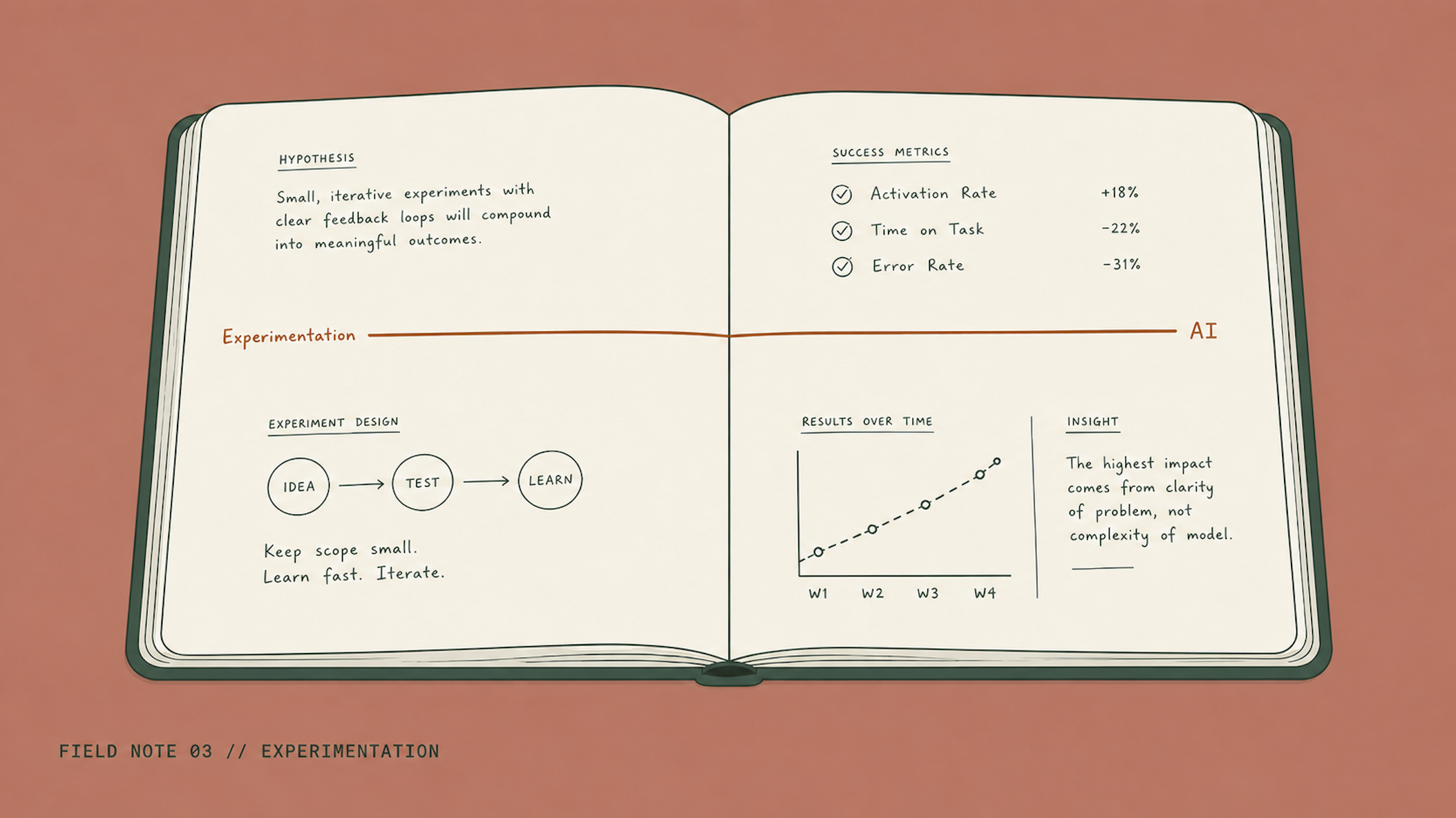

TL;DR: Most AI projects fail because organisations treat AI as a technology problem when it is a discipline problem. The same evidence-based working pattern that powers experimentation programmes (clear hypotheses, defined success metrics, controlled rollouts, feedback loops, cross-functional translation) is exactly what AI projects need. CRO and experimentation practitioners already have that muscle. That makes them, in my experience, the best AI adopters in the room.

Why do most AI projects fail?

Most AI projects fail because nobody agreed in writing what success looked like before the work started. The headline figures vary, but they point the same way. MIT's GenAI Divide report (August 2025) estimates that 95% of generative AI pilots fail to deliver measurable returns. RAND puts the broader AI project failure rate at around 80%. Industry analysis of recent Deloitte and related surveys puts the share of companies that abandoned at least one AI initiative in 2025 at 42%, with an average sunk cost of around $7.2 million per abandoned project.

I have seen this pattern before. Not in AI. In experimentation, fifteen years ago.

Teams would build something. Everyone would agree it looked better. The measurement would come in weeks later, and the answer would be nothing like what the room expected. The failures were not technical. They were discipline failures. No clear hypothesis. No success criteria. No controlled environment. No feedback loop.

The tools are louder now. The budgets are bigger. The gap is the same.

What does a decade of experimentation actually teach you?

Experimentation teaches you how to make decisions with evidence rather than opinion. The statistical work is the smallest part of it. The real skill is the habit of asking three questions before you build anything: are we solving the right problem, can we prove this works before we commit, and what is the fastest way to find out what is actually going to land.

Most people hear 'experimentation' and think A/B testing. That is only the tip of it.

A decade of running experimentation programmes for Vitality, LV=, RNLI, NatWest, and Farrow & Ball teaches you UX, psychology, analytics, prioritisation, and how to talk to engineers, designers, and commercial leads in the same conversation.

The contexts changed wildly across charity, banking, e-commerce, and automotive. The pattern never did. Find the biggest gap, design a test that could prove the idea wrong, let the data decide.

That discipline does not belong to experimentation. It belongs to anyone trying to make decisions with evidence instead of opinions. Which is exactly what AI adoption requires, and what almost nobody has.

Which experimentation skills transfer directly to AI?

Five experimentation skills transfer directly into AI work: hypothesis framing, defined success metrics, controlled environments, feedback loops, and cross-functional translation. These are not generic transferable skills. They are the specific muscles an AI adoption programme asks you to flex every day.

Hypothesis framing. Every experiment starts with a sentence. 'We believe [this change] will [cause this outcome] because [this reason].' AI projects that skip this sentence cannot tell whether they have succeeded.

Defining success before you start. Experimentation demands pre-registered success metrics. Most AI pilots define success after they have seen the results. That is not measurement. It is rationalisation.

Controlled environments. Testing teaches you to isolate variables. AI implementations that change five things at once cannot attribute outcomes to any of them. If a new AI workflow is working, you need to know why. If it is failing, you need to know which part is failing.

Feedback loops. Experimentation builds measurement in by design. The best AI systems do the same: not as an afterthought once production is running, but as part of the architecture from day one. Lifting the RNLI donation journey's conversion rate by 28% was not a moment of design genius. It was the result of a measurement loop running long enough to surface what worked.

Cross-functional translation. A decade of experimentation teaches you to speak engineering, design, analytics, and commercial in the same conversation. AI adoption needs exactly that. The prompt engineer who cannot explain the P&L impact never gets the business case approved.

If you have already spent ten years building these muscles, you have an unfair advantage.
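The first three habits on that list are the hypothesis sentence, a success bar fixed in advance, and a controlled split. Each can be made concrete in a few lines. The sketch below is a minimal illustration in Python. It assumes a web-style rollout where each user is bucketed into either a control arm or an AI-assisted treatment arm. `Hypothesis`, `assign_arm`, and the example values are hypothetical names chosen for illustration, not a specific tool or the author's own implementation.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Hypothesis:
    """A hypothesis and its success bar, written down before any data is seen.

    frozen=True means fields cannot be reassigned once the object exists,
    mirroring the rule that success is not redefined after results arrive.
    """
    change: str           # what we are changing
    outcome: str          # what we expect the change to cause
    reason: str           # why we believe it will
    primary_metric: str   # the one metric that decides success
    min_uplift: float     # smallest relative uplift that counts as a win (0.05 = +5%)
    registered_at: str    # ISO 8601 timestamp of when the hypothesis was written

    def statement(self) -> str:
        # Render the 'We believe [change] will [outcome] because [reason].' sentence.
        return f"We believe {self.change} will {self.outcome} because {self.reason}."


def assign_arm(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically place a user in 'control' or 'treatment'.

    Hashing experiment name + user id gives each user a stable bucket, so they
    see the same variant on every visit. That helps keep the AI change as the
    only systematic difference between the two groups.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # first 32 bits of the hash, scaled to [0, 1)
    return "treatment" if bucket < treatment_share else "control"


if __name__ == "__main__":
    # Hypothetical example: every value below is illustrative.
    h = Hypothesis(
        change="an AI-generated comparison summary on product pages",
        outcome="increase add-to-basket rate",
        reason="shoppers can compare options without leaving the page",
        primary_metric="add_to_basket_rate",
        min_uplift=0.05,
        registered_at=datetime.now(timezone.utc).isoformat(),
    )
    print(h.statement())
    print(assign_arm("user-123", "ai-summary-pilot"))
```

The frozen dataclass is the code equivalent of writing the success criteria down in advance: trying to change `min_uplift` after the fact raises an error. Hash-based bucketing is one common way to split traffic. In practice an experimentation or feature-flag platform may handle it instead.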

Why do most AI adopters get this wrong?

Most AI adopters get this wrong because they treat AI adoption as a technology problem rather than a discipline problem. Pick the right model. Write better prompts. Buy the right tools. Run the right pilots. The 95% failure rate says the problem is not technology. It is the absence of an evidence-based way of working.

You would not ship a website redesign without measuring whether it performed better than the version it replaced. You would not rewrite pricing logic without running it against the old version first.

And yet AI workflows get shipped every day with no baseline, no success metric, no control, and no way to tell whether they have moved a number.

This is where experimentation practitioners have the edge. They have spent years building the exact muscle AI adoption demands, in an environment where stakeholders would not let them ship without it.

How does experimentation translate into AI consulting?

Moving from experimentation to AI was not a pivot. It was a straight line. The questions I ask when I design AI systems are the same ones I asked when I designed experimentation programmes. What problem are we solving? What would success look like? How will we know? What is the fastest way to find out if we are wrong? What do we do when the evidence disagrees with the idea?

The context is new. The discipline is unchanged.

That is why CRO and experimentation practitioners are, in my experience, the best AI adopters. Not because they already understand the tech (some do not) but because they already understand the way of working. Which is the part most organisations are missing.

What should you do next?

If you have an experimentation background, you already have the most valuable skill in AI: the discipline of knowing whether what you have built is actually working.

If you are hiring for AI roles, look past technical credentials. The people who can frame hypotheses, define success, and build feedback loops will outperform prompt engineers every time.

If you are about to start your next AI project, the most important thing you can do is not write a brief. Write a hypothesis. Evidence over instinct. By design, not random.

The longer story behind how experimentation became the foundation for the way I work with AI now lives on the About page.

Frequently asked questions

Why do most AI projects fail?

Most AI projects fail because organisations skip the discipline questions. No clear hypothesis. No defined success metric. No controlled rollout. No feedback loop. Technology is rarely the binding constraint. The absence of an evidence-based way of working is.

What is the most transferable skill from CRO and experimentation to AI?

The most transferable skill is hypothesis framing: stating in writing what you believe will happen, why, and how you will know. Every other discipline (defining metrics, controlling variables, building feedback loops) follows from that habit.

What is the difference between a prompt engineer and an experimentation-trained AI lead?

A prompt engineer optimises the input to a model. An experimentation-trained AI lead designs the system around the model: the hypothesis, the success metric, the controlled rollout, the feedback loop, and the case for stopping if the evidence does not land. The first is a craft. The second is a discipline.

How do I tell if my AI project is actually working?

Compare the outcome to a success metric you wrote down before the project started. If you cannot point at the metric, or the metric was written after the results came in, you do not have evidence. You have a story. Evidence over instinct.
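Here is a minimal Python sketch of what that comparison can look like. It makes three assumptions:

- the pre-registered metric is a conversion rate;
- the AI change ran against a control group;
- the success bar was written down as a minimum relative uplift plus a significance level.

The function `evaluate`, the choice of a two-proportion z-test, and the example numbers are all assumptions for illustration, not a prescribed method.

```python
from math import sqrt
from statistics import NormalDist


def evaluate(control_conv: int, control_n: int,
             treat_conv: int, treat_n: int,
             min_uplift: float, alpha: float = 0.05) -> dict:
    """Judge a treatment against a success bar that was fixed before the test ran.

    min_uplift and alpha are the pre-registered criteria: the smallest relative
    uplift that counts as a win (0.10 = +10%) and the significance level.
    Uses a two-sided, two-proportion z-test with a pooled standard error.
    """
    p_c = control_conv / control_n   # baseline conversion rate
    p_t = treat_conv / treat_n       # conversion rate with the AI change
    pooled = (control_conv + treat_conv) / (control_n + treat_n)
    se = sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    if p_c == 0 or se == 0:
        raise ValueError("need a non-zero baseline rate and variation in outcomes")
    z = (p_t - p_c) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    uplift = (p_t - p_c) / p_c       # relative change versus the baseline
    return {
        "uplift": uplift,
        "p_value": p_value,
        # Success only if the result is both significant and at least as big as promised.
        "success": p_value < alpha and uplift >= min_uplift,
    }


if __name__ == "__main__":
    # Illustrative numbers: 5.0% baseline vs 6.0% with the AI change.
    print(evaluate(500, 10_000, 600, 10_000, min_uplift=0.10))
```

With those illustrative numbers the uplift is about 20% and the p-value is well under 0.01, so the result clears a 10% bar. Called with `min_uplift=0.25` on the same data, it would not. The threshold is an argument fixed before the call, not something chosen after looking at `uplift`.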