From Mega-Prompt to Multi-Agent

“The real shift with AI isn’t writing better prompts. It’s about designing the architecture around the model, breaking work into focused parts with clear interfaces so the system remains performant, legible, and maintainable. That’s not a new skill; it’s an experimentation discipline (decompose, isolate, test, iterate) applied to a new surface. The long-term advantage isn’t a bigger model; it’s the engineering instinct to build rails around the right one for the task.”

In my experience, a single AI system prompt stops scaling somewhere around 5,000-8,000 tokens. Mine hit 8,000 before it broke. Instructions started bleeding into each other, and softening one section quietly disabled another. The fix wasn't a cleverer prompt. It was breaking the prompt apart into specialised agents.

Each week, I added something new. Another skill, another guardrail, a tweak to fix the tone that felt off last Tuesday. The more I added, the less it performed.

The break was subtle. I softened the writing section to take the edge off the sales language, and suddenly the review logic stopped catching issues I knew were there. Instructions started bleeding into each other. What I had was no longer a prompt; it was a system with no real structure.

If you’ve built anything with AI, you’ve probably seen this happen. Past a certain point, the more you load in, the worse it gets.

01 - FOUNDATIONS

Why does one big system prompt stop working?

A context window isn’t a bottomless container. It’s a finite budget you spend with every instruction.

Every instruction, every tool description, and every example costs tokens on every turn. A massive system prompt burns through attention before the user even starts. The model ends up with less room to think, not more.
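
The budget is easy to put numbers on. Here is a minimal sketch of the arithmetic, assuming a stateless chat API that re-sends the full system prompt on each turn with no prompt caching. The 8,000-token prompt is the one from this article; the session length, window size, and history size are assumptions picked for illustration, and both helper names are hypothetical.

```python
def prompt_overhead(system_prompt_tokens: int, turns: int) -> int:
    """Input tokens spent re-sending the same system prompt over a session."""
    return system_prompt_tokens * turns


def room_to_think(window: int, system_prompt_tokens: int, history_tokens: int) -> int:
    """Tokens left in the context window for the task itself."""
    return window - system_prompt_tokens - history_tokens


# 8,000 is the prompt size from this article; the 20 turns, 32,000-token
# window, and 10,000 tokens of history are assumptions for illustration.
print(prompt_overhead(8_000, 20))            # 160000
print(room_to_think(32_000, 8_000, 10_000))  # 14000
```

Under those assumed numbers, the system prompt alone accounts for 160,000 input tokens over the session, and it takes a quarter of the window on every turn.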

The answer isn’t a cleverer prompt. It’s a smaller one, repeated. Specialised agents, each handling a focused job.

This is the shift: from prompt to architecture. One planner can’t also be the best writer, researcher, and reviewer. The work needs to be split into sub-agents and skills, each with a narrow remit. The context window isn’t where instructions live. It’s where the thinking happens. Protect it.

02 - REBUILT

What does a multi-agent AI workflow look like in practice?

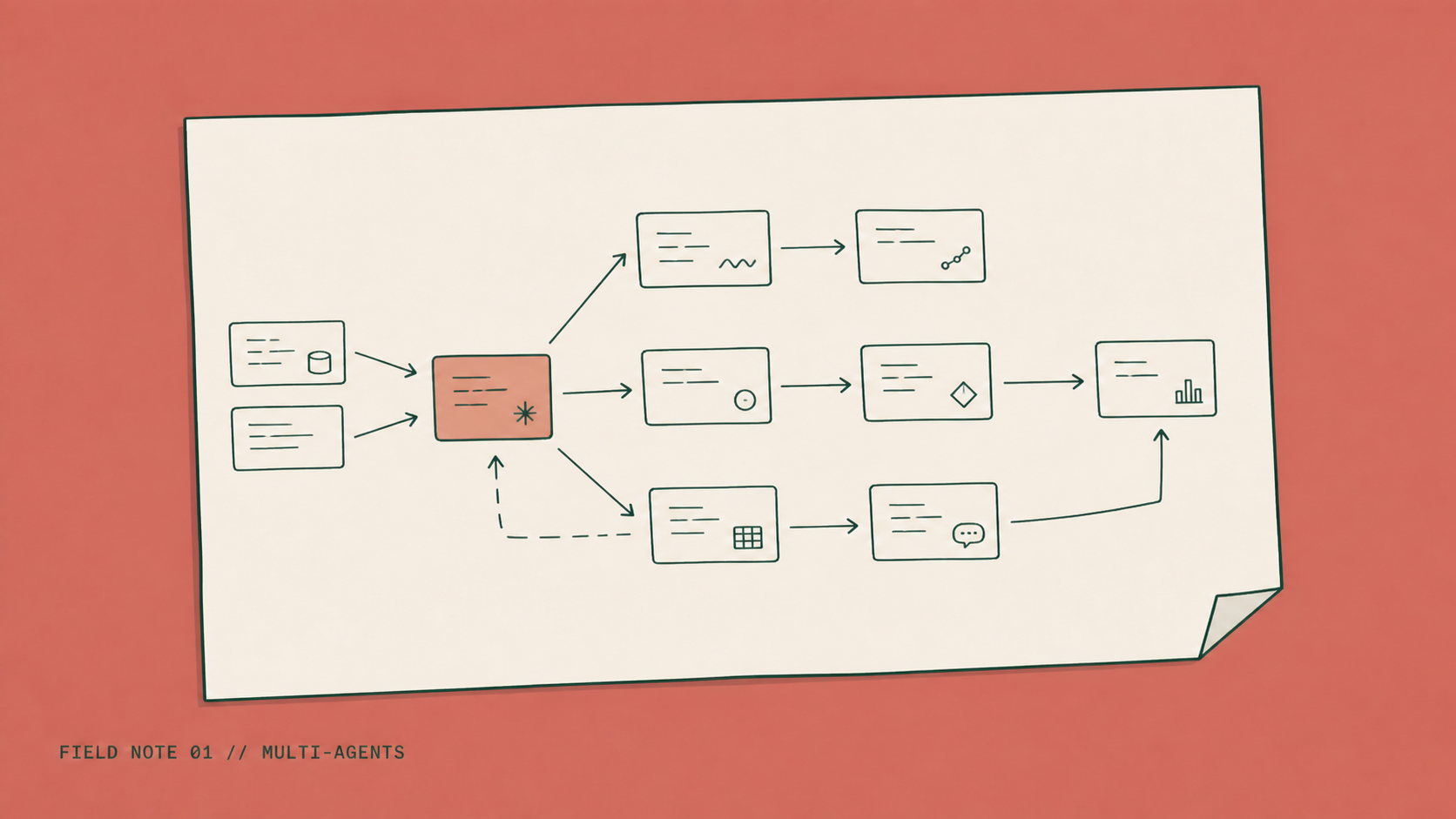

The replacement was four agents and eleven skills. A planner decomposes the work, a researcher gathers evidence, a writer drafts in voice, a reviewer audits against criteria. Skills load on demand. The whole architecture fits on a napkin.

Four agents, each with its own system prompt and its own narrow job:

A planner that decomposes the work and decides who does what

A researcher that gathers evidence and returns clean findings

A writer that drafts in a defined voice and register

A reviewer that audits output against explicit criteria

Alongside the agents, I built eleven skills—each a short markdown file that loads only when needed. One to write for steve-quinlan.com. One to run a GEO audit. One to hand off a session so the next can pick up cleanly. The planner never touches them directly. It decides which subagent needs which skill and delegates.

The flow is simple. The planner plans. The right subagent gets the task. Skills load on demand. Each agent returns a clean result. Nothing tries to hold everything at once.
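
That flow can be sketched in a few lines of Python. This is an illustration under assumptions, not the setup described here, which is markdown files and configuration rather than custom code. The `Agent` class, the `SKILLS` dictionary, the skill names and texts, and the hand-written `plan` are all hypothetical, and `run` is a stub standing in for a model call.

```python
from dataclasses import dataclass

# Placeholder skill texts. In the setup described above these would be
# short markdown files on disk; names and contents here are hypothetical.
SKILLS = {
    "site-voice": "Write in the site's voice and register.",
    "geo-audit": "Run the GEO audit checklist.",
}


@dataclass
class Agent:
    name: str
    system_prompt: str

    def run(self, context: str) -> str:
        # Stand-in for a real model call: report how much context was sent.
        return f"{self.name}: received {len(context.split())} words"


def build_context(agent: Agent, task: str, skill_names: list[str]) -> str:
    """Assemble only what this step needs: one prompt, its skills, the task."""
    skills = [SKILLS[name] for name in skill_names]  # looked up on demand
    return "\n\n".join([agent.system_prompt, *skills, task])


def dispatch(agents: dict[str, Agent], plan: list[dict]) -> list[str]:
    """Route each planned step to its agent and collect the results."""
    results = []
    for step in plan:
        agent = agents[step["agent"]]
        context = build_context(agent, step["task"], step.get("skills", []))
        results.append(agent.run(context))
    return results


agents = {
    "researcher": Agent("researcher", "Gather evidence and return findings."),
    "writer": Agent("writer", "Draft in the defined voice."),
}
# In a real system the planner agent would produce this plan.
plan = [
    {"agent": "researcher", "task": "Find sources on prompt size."},
    {"agent": "writer", "task": "Draft the intro.", "skills": ["site-voice"]},
]
for line in dispatch(agents, plan):
    print(line)
```

The point of the shape is that no context string ever contains every prompt and every skill at once. Each step carries one system prompt, the skills it was assigned, and its task.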

The difference is clear in the numbers. My main system prompt dropped from 8,000 tokens to under 1,500. Output quality improved, not because the model changed, but because each agent was focused and had the right context. Where it still breaks is at the handoff. If the planner gives a lazy instruction, the result downstream is lazy too. That’s a design problem, not a model problem.
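
One way to make the handoff harder to get wrong is to give it a shape and refuse to send a brief that leaves parts of that shape empty. The sketch below is an assumption about how that might look, not something taken from the setup described here: the `Brief` fields, the five-word threshold, and the `validate` helper are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """What the planner hands to a subagent. Field names are hypothetical."""
    goal: str
    audience: str
    success_criteria: list[str] = field(default_factory=list)


def validate(brief: Brief) -> list[str]:
    """Return the problems found; an empty list means the brief can be sent."""
    problems = []
    if len(brief.goal.split()) < 5:  # arbitrary threshold for illustration
        problems.append("goal is too short to act on")
    if not brief.audience.strip():
        problems.append("audience is missing")
    if not brief.success_criteria:
        problems.append("no success criteria for the reviewer to check")
    return problems


lazy = Brief(goal="Write a post", audience="")
for problem in validate(lazy):
    print(problem)
```

A check like this does not make the planner write good instructions, but it turns a lazy handoff into a visible failure at the boundary instead of a vague result downstream.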

03 - ARCHITECTURE

Why does AI architecture matter more than the model you choose?

Three gains make this worth the effort: cost, quality, and maintainability.

Cost comes down because each call carries a smaller, focused prompt. The organisational knowledge tax (paying for every instruction on every turn, whether it’s needed or not) disappears.

Quality goes up because specialised context produces specialised output. The writer agent doesn’t need to know how to query a database. The reviewer doesn’t need tone-of-voice instructions. Each one sees only what it needs.

Maintainability is the quiet win. When a behaviour needs to change, I change one skill—not the whole system. The change is testable, isolated, and easy to recover. If something breaks, it’s really clear where.

Here’s the deeper point: the model isn’t the product. The system you build around it is.

Choosing a bigger model is the easy lever. Building the rails around a smaller one is harder and better. Evidence beats instinct. Architecture is the discipline. Everyone prompts. Few design.

04 - EXPERIMENTATION THINKING

How does experimentation thinking apply to AI architecture?

A decade of running experimentation programmes taught me to break big problems into testable units. Define the hypothesis. Isolate the variable. Observe what happens. Iterate.

Multi-agent architecture is the same experimentation discipline, just on a new surface. One responsibility per agent. One job per skill. One clear interface between them. Test the parts. Trust the whole.
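
"Test the parts" can be as small as a fixed set of cases run against one agent at a time. The following is a minimal sketch under assumptions: the cases are invented, `stub_reviewer` is a keyword check standing in for the reviewer agent, and `pass_rate` is a hypothetical helper. In practice the stub would be replaced by a call to the real reviewer.

```python
from typing import Callable

# Each case pairs a draft with whether the reviewer should flag it.
# Both cases are invented for illustration.
CASES = [
    ("Our revolutionary product will change your life!!!", True),
    ("The prompt dropped from 8,000 tokens to under 1,500.", False),
]


def stub_reviewer(draft: str) -> bool:
    """Stand-in for the reviewer agent: flag obvious hype markers."""
    return "!!!" in draft or "revolutionary" in draft.lower()


def pass_rate(reviewer: Callable[[str], bool], cases: list[tuple[str, bool]]) -> float:
    """Fraction of cases where the reviewer's verdict matches the expected one."""
    hits = sum(reviewer(draft) == expected for draft, expected in cases)
    return hits / len(cases)


print(pass_rate(stub_reviewer, CASES))
```

Change one skill, rerun the same cases, and compare the rate. That is the isolate-the-variable step applied to a single agent.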

The context is new. The discipline is unchanged.

This is what I mean by 'by design.' Not random. Not accidental. Engineered so the humans, both users and maintainers, stay at the centre. The tools have changed. The instinct to break the problem down, test the pieces, and let evidence settle the argument hasn’t.

05 - WHERE TO START

How do you start moving from a mega-prompt to multi-agent?

If the prompt is growing, that’s the signal.

Pick the one responsibility that stands apart (reviewing, writing, or researching) and pull it into its own agent or skill. Measure what changes: cost, quality, time to fix when something breaks.

Expand by design, not by accident. The only AI systems worth keeping are the ones a human can still maintain.

“Most of what I write here comes out of real client work. If you’ve read this and thought ‘that sounds like us,’ I’d genuinely like to hear about it. The best conversations I have start with someone describing the messy version of the problem. Get in touch and let’s talk it through.”

06 - QUESTIONS

FAQs

Q.01

How big is too big for a system prompt?

The signal isn't a token count; it's interference, when changing one section breaks another. I felt mine break around 8,000 tokens, but I've seen 3,000-token prompts tangle the same way. If softening the writing instructions quietly disables the reviewer, the prompt is already doing two jobs that should be separate. That's the threshold, not the size.

Q.02

What's the difference between an agent and a skill?

An agent is a worker with its own system prompt and a persistent role: planner, researcher, writer, reviewer. A skill is a short markdown instruction file that loads on demand for a specific task. Agents persist; skills appear when needed. No agent carries every skill in its context. The planner decides which subagent needs which skill, and only that one loads.

Q.03

Doesn't running multiple agents cost more than one big prompt?

Usually less. The architecture looks expensive because you're making more calls, but each call carries a much smaller prompt. My planner dropped from 8,000 tokens to under 1,500, roughly 80% off the input bill before the actual work even starts. The hidden cost in mega-prompt setups is paying for instructions that aren't being used on a given turn.
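
The arithmetic behind that figure is short enough to check. This sketch uses the 8,000 and 1,500 token counts from the answer above and counts system-prompt tokens only. It ignores task text, skill text, and output tokens, so it is a rough bound rather than a billing model, and both helper names are hypothetical.

```python
def reduction(before: int, after: int) -> float:
    """Fractional drop in system prompt size."""
    return (before - after) / before


def break_even_calls(before: int, after: int) -> float:
    """Small-prompt calls that cost the same prompt tokens as one big-prompt call."""
    return before / after


print(f"{reduction(8_000, 1_500):.2%}")         # 81.25%
print(f"{break_even_calls(8_000, 1_500):.1f}")  # 5.3
```

On prompt tokens alone, a task would need more than five 1,500-token calls before it cost as much as a single call carrying the 8,000-token prompt.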

Q.04

What's the simplest first move if my prompt is getting heavy?

Pull out the one responsibility that stands apart. Reviewing is usually the easiest split: it has different criteria, a different tone, and different success conditions from the writing or research that produced the output. Make the reviewer its own agent or skill, measure what changes (cost, error rate, time to fix when something breaks), then expand from there.

Q.05

Do I need an engineering team to build a multi-agent setup?

No. The architecture I described (four agents, eleven skills) is markdown files and configuration, not custom code. If you can write a clear instruction document, you can build a subagent. The harder part isn't engineering. It's deciding what each agent's job actually is and resisting the urge to give any one of them more than one job.